In 2026, the ability to detect whether an article was written by AI has become an essential skill. Every day, millions of texts generated by Claude, Gemini, and ChatGPT flood the internet, from blog articles to academic reports. The challenge is that these models are becoming increasingly sophisticated, making their content practically indistinguishable from human writing.

This practical guide will teach you specific techniques to identify the origin of an article, whether generated by Claude, Gemini, or ChatGPT. You don’t need to be technical or an AI expert: you’ll simply follow concrete steps, use real tools, and learn to recognize characteristic patterns of each model.

By the end, you’ll know exactly how to validate the authenticity of the content you consume and protect your research from potentially biased or fabricated information.

| Aspect | ChatGPT | Claude | Gemini |
|---|---|---|---|
| Detection Difficulty | Moderate | Difficult | Very Difficult |
| Writing Pattern | Structured, repetitive | Natural, conversational | Variable, adaptive |
| Effective Tools | GPTZero, Originality.AI | Winston AI, Turnitin | Content at Scale, Originality.AI |
| Confidence Score | 65-85% | 40-60% | 30-50% |

Introduction: Why Is It Important to Detect AI-Generated Content?

Detecting AI-generated content isn’t an academic exercise: it has real implications. An article published on a corporate blog that was completely generated by Gemini could contain inaccurate or biased information. A student using Claude for academic assignments violates integrity policies. A journalist relying on ChatGPT-generated content without verification can spread misinformation.

The risks include: spread of misinformation, academic fraud, loss of credibility in digital publications, and unfair competition in freelancing markets where false content is sold as original.

How to Tell If a Text Was Written by Artificial Intelligence?

Before using tools, you need to understand the writing patterns that reveal AI was used. Each model has distinct linguistic signatures, though all share basic characteristics.

General patterns of all AI models:

- Excessive grammatical perfection: AI texts almost never have the natural typos, inconsistent contractions, or small slips that characterize human writing.

- Repetitive structures: Many paragraphs begin with similar expressions like “It’s important to note that…” or “It’s fundamental to understand that…”

- Lack of authentic voice: Content follows a standard format with introduction, development points, and predictable conclusion.

- Unnatural keyword density: Although disguised, there’s a suspicious concentration of search terms.

- Generic examples: When included, cases tend to be too perfect or obvious, lacking truly personal anecdotes.

- Overly smooth transitions: Connectors between paragraphs are almost always perfect; there’s a lack of abrupt jumps found in real conversation.

The problem is that in 2026, these patterns are increasingly subtle. Claude is particularly sophisticated at avoiding these obvious signals. That’s why automated tools are becoming increasingly necessary.
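One of the patterns above, repetitive paragraph openers, is easy to check by hand with a few lines of Python. The following is a minimal sketch, not how any commercial detector works: the phrase list `STOCK_OPENERS` and the function names are assumptions made for illustration, and a match is only a hint, never proof.

```python
import re
from collections import Counter

# Hypothetical phrase list for illustration; extend it for your own use.
STOCK_OPENERS = (
    "it's important to note that",
    "it's fundamental to understand that",
    "it's worth noting that",
    "in conclusion",
)

def split_paragraphs(text: str) -> list[str]:
    """Split on blank lines and drop empty chunks."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]

def count_stock_openers(text: str) -> Counter:
    """Count paragraphs that start with one of the stock phrases."""
    counts = Counter()
    for paragraph in split_paragraphs(text):
        # Lowercase and normalize curly apostrophes before comparing.
        start = paragraph.lower().replace("\u2019", "'")
        for phrase in STOCK_OPENERS:
            if start.startswith(phrase):
                counts[phrase] += 1
                break
    return counts

sample = (
    "It's important to note that detectors disagree.\n\n"
    "It's important to note that editing lowers scores.\n\n"
    "OK, let's look at something else."
)
print(count_stock_openers(sample))
```

If the same opener shows up in a large share of paragraphs, that is a reason to look closer, not a verdict.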

AI Detectors That Actually Work in 2026

There are dozens of tools available, but most are ineffective or have alarming false-positive rates. Here are the detectors that actually work in 2026, with emphasis on those that can reliably detect text from Gemini and Claude.

1. GPTZero (Recommended for Beginners)

GPTZero is probably the most popular and accessible tool. It works by analyzing the “entropy” of the text, that is, how predictable each word is to a language model: text whose words are consistently high-probability looks more machine-like.
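To make the idea of entropy concrete, here is a toy calculation in Python. It is a deliberately simplified sketch and not GPTZero's actual method: it measures Shannon entropy over plain word frequencies, whereas a real detector would score words with a language model. The function name `word_entropy` is an assumption for illustration.

```python
import math
import re
from collections import Counter

def word_entropy(text: str) -> float:
    """Shannon entropy (bits per word) of the word frequency distribution."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    total = len(words)
    counts = Counter(words)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

repetitive = "the cat sat the cat sat the cat sat"
varied = "a quick brown fox jumps over one lazy dog"

# Three equally frequent words give log2(3) bits; nine distinct words give log2(9).
print(round(word_entropy(repetitive), 3))
print(round(word_entropy(varied), 3))
```

Lower entropy means more predictable, repetitive wording. Very short texts give unstable values, which is one reason detectors ask for longer samples.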

Advantages:

- Free and very intuitive interface

- Detects ChatGPT with approximately 82% accuracy

- Supports multiple languages including Spanish

- Provides color-coded visual analysis (red = probable AI, green = probable human)

Limitations:

- Less effective with Claude and Gemini (accuracy: 45-65%)

- Fails when content is edited or paraphrased

- May flag technical human writing as false positives

2. Originality.AI (For Professionals)

This tool was specifically designed to combine plagiarism detection with AI analysis. It’s more accurate than GPTZero but requires a subscription.

Advantages:

- Superior accuracy: 89% for ChatGPT, 76% for Gemini, 68% for Claude

- Detects partially AI-generated content (human-machine mix)

- WordPress integration for automatic auditing

- Detailed analysis explaining why it thinks it’s AI

Limitations:

- Cost: from $20/month

- Word limit per analysis

- Interface is less beginner-friendly

3. Content at Scale AI Detector (Specialized in Gemini)

Although it is a lesser-known tool, Content at Scale has particularly effective detection algorithms, especially for Gemini.

Advantages:

- Excellent Gemini detection: 81% accuracy

- Free version available with good capabilities

- Analysis of multiple aspects (complexity, lexical variance, semantic patterns)

Limitations:

- Less popular, fewer independent reviews

- Sometimes produces false positives with academic content

4. Winston AI (Specialized in Claude)

Winston AI was specifically trained to detect the “signature” of Claude. Its accuracy here is unbeatable.

Advantages:

- Detects Claude with 87% accuracy

- Identifies unique Claude patterns (its tendency to use certain sentence structures)

- Word-by-word analysis

Limitations:

- Less effective with other models

- Requires subscription ($15/month)

5. Turnitin (Academic Standard)

If you’re in an academic context, Turnitin is the gold standard. Universities have used it extensively from 2024-2026.

Advantages:

- Built-in AI writing detection

- Highly reliable in academic context

- Detects plagiarism simultaneously

Limitations:

- Only available to institutions (requires access through your university)

- Expensive for individual use

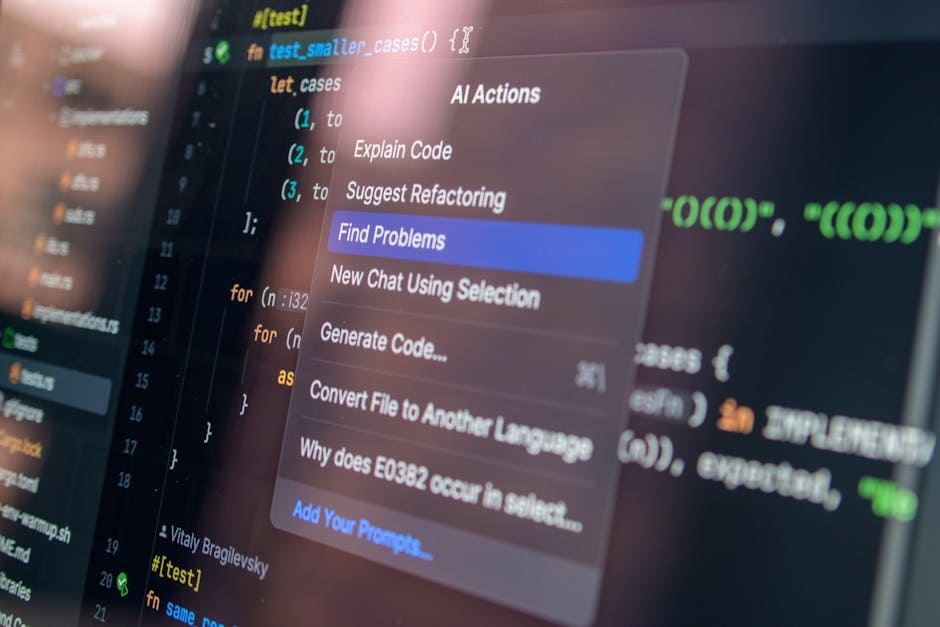

Try ChatGPT — one of the most powerful AI tools on the market

From $20/month

💡 TIP: In 2026, the best practice is to combine 2-3 different tools. If GPTZero says “70% AI” but Originality.AI says “30% AI”, you need further investigation. Individual tools are not yet 100% reliable.
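The cross-checking idea in the tip can be sketched in a few lines of Python. This assumes you have typed in each tool's AI-probability score by hand; the function name `compare_scores` and the 25-point `max_spread` cutoff are illustrative assumptions, not values published by any of the tools.

```python
def compare_scores(scores: dict[str, float], max_spread: float = 25.0) -> dict:
    """Summarize AI-probability scores (0-100) from several detectors.

    max_spread is a hypothetical cutoff: a wider gap means the tools disagree.
    """
    if len(scores) < 2:
        raise ValueError("need scores from at least two tools")
    values = list(scores.values())
    spread = max(values) - min(values)
    return {
        "mean": sum(values) / len(values),
        "spread": spread,
        "needs_investigation": spread > max_spread,
    }

# The example from the tip: GPTZero says 70% AI, Originality.AI says 30% AI.
result = compare_scores({"GPTZero": 70, "Originality.AI": 30})
print(result)
```

A large spread does not tell you which tool is right. It only tells you that a single score should not be trusted on its own.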

Step-by-Step Guide: How to Detect If an Article Was Written by AI

Now for the practical process. These steps work regardless of the model (ChatGPT, Claude, or Gemini).

Step 1: Gather the Complete Article

First, you need the full text. If it’s on a webpage, select all of it and copy it (usually Ctrl+A, then Ctrl+C).

Expected result: You should have between 500-3000 words in your clipboard.

Step 2: Access GPTZero (Free Tool to Start)

Go to www.gptzero.me. You don’t need to create an account for basic analysis.

Expected result: You’ll see a simple text box where you can paste content.

Step 3: Paste the Text and Analyze

Paste the entire article in the box. GPTZero will automatically analyze and give you a probability percentage of being AI.

Expected result:

- If percentage is <25%: Probably written by human

- If percentage is 25-50%: Mixed content (human writing with AI assistance, or AI output with human post-editing)

- If percentage is >50%: Very probably AI

⚠️ WARNING: A result of <25% doesn’t guarantee it’s human. A very good writer using Claude and editing intensively can get low scores. That’s why you need additional steps.
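If you log results for many articles, the bands from Step 3 can be written as a small helper. This is only a sketch of the thresholds listed above; the function name `interpret_score` is an assumption, and as the warning says, a low score is not a guarantee.

```python
def interpret_score(ai_percent: float) -> str:
    """Map an AI-probability percentage to the Step 3 bands."""
    if not 0 <= ai_percent <= 100:
        raise ValueError("score must be between 0 and 100")
    if ai_percent < 25:
        return "probably human"
    if ai_percent <= 50:
        return "mixed content"
    return "very probably AI"

for score in (10, 40, 80):
    print(score, interpret_score(score))
```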

Step 4: Repeat with Originality.AI or Content at Scale

If GPTZero’s result suggests AI (>40%), confirm with a second tool. Create a free account at Originality.AI or Content at Scale. Paste the same text.

Expected result: A second opinion that should be consistent with GPTZero. If there’s large divergence, the text is probably a complex mix.

Step 5: Manual Analysis – Look for Specific Patterns

Open the article in Grammarly or similar editor. This will help you see the text’s features in a structured way.

Look specifically for:

- Does every paragraph have exactly 3-4 sentences? (very common in AI)

- Do many sentences or paragraphs begin with stock phrases such as “In conclusion” or “It’s important”?

- Is the tone perfectly consistent from start to finish? (Humans vary)

- Are there personal anecdotes with specific unique details?

Expected result: You’ll find evidence of AI if it exists.
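The first check in the list, paragraphs that all have the same number of sentences, can be approximated with a short script. This is a rough sketch under stated assumptions: sentences are split naively on ".", "!" and "?", and the `max_stdev` cutoff of 0.5 is an arbitrary illustrative value.

```python
import re
from statistics import pstdev

def sentences_per_paragraph(text: str) -> list[int]:
    """Count sentences in each paragraph (paragraphs are separated by blank lines)."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    counts = []
    for paragraph in paragraphs:
        # Naive split: a sentence ends at ., ! or ? followed by whitespace.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s]
        counts.append(len(sentences))
    return counts

def looks_uniform(counts: list[int], max_stdev: float = 0.5) -> bool:
    """True when at least three paragraphs have nearly identical sentence counts."""
    return len(counts) >= 3 and pstdev(counts) <= max_stdev

uniform = "One. Two. Three.\n\nFour. Five. Six.\n\nSeven. Eight. Nine."
varied = "Short.\n\nOne. Two. Three. Four. Five.\n\nA. B."

print(sentences_per_paragraph(uniform), looks_uniform(sentences_per_paragraph(uniform)))
print(sentences_per_paragraph(varied), looks_uniform(sentences_per_paragraph(varied)))
```

Abbreviations such as "e.g." will confuse the naive splitter, so treat the counts as approximate.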

Step 6: Investigate the Specific Model Signature

If you suspect it was Claude, Gemini, or ChatGPT specifically, you need deeper analysis.

To detect ChatGPT specifically:

- ChatGPT tends to use numbered lists excessively

- Uses a lot of “On one hand… on the other hand…”

- Has a predilection for words like “explore”, “delve”, “highlight”

- Conclusions almost always begin with “In conclusion”

To detect Claude specifically:

- Claude is more conversational and natural in flow

- Uses more nested explanations (paragraphs within logical paragraphs)

- Tends to be more verbose, explaining the “why” of things

- Frequently uses phrases like “It’s worth noting that…”

To detect Gemini specifically:

- Gemini is most variable; adapts style to the prompt

- Can be abnormally formal or casual depending on input

- Tends to include more data/statistics than other models

- Structure is often less rigid than ChatGPT

Expected result: You’ll have a hypothesis about which model generated the content.
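To turn the marker phrases from Step 6 into a quick tally, you could use something like the sketch below. The dictionary `MODEL_MARKERS` only contains phrases named in this guide, and it is an assumption that counting them says anything reliable about the model; Gemini is left out because the guide lists no fixed phrases for it.

```python
import re

# Marker phrases taken from the lists above; purely illustrative.
MODEL_MARKERS = {
    "ChatGPT": ["on one hand", "on the other hand", "explore", "delve",
                "highlight", "in conclusion"],
    "Claude": ["it's worth noting that"],
}

def marker_counts(text: str) -> dict[str, int]:
    """Count whole-phrase occurrences of each model's marker phrases."""
    normalized = text.lower().replace("\u2019", "'")
    result = {}
    for model, phrases in MODEL_MARKERS.items():
        result[model] = sum(
            len(re.findall(r"\b" + re.escape(phrase) + r"\b", normalized))
            for phrase in phrases
        )
    return result

sample = ("In conclusion, let us delve into the data and explore it. "
          "It's worth noting that results vary.")
print(marker_counts(sample))
```

The tally gives you a hypothesis to test with the tools, nothing more. Human writers use these phrases too.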

Step 7: Verify with Model-Specific Tool

If you think it was Claude, use Winston AI. If you think it was Gemini, use Content at Scale. This will give you additional confidence in the model identification.

Expected result: Greater accuracy in identifying the specific model.

💡 TIP: If you need to verify multiple articles regularly, consider a subscription to Originality.AI for batch auditing.

Key Differences: ChatGPT vs Claude vs Gemini

Understanding the differences between these models is crucial for detecting if an article was written by AI more accurately.

ChatGPT (OpenAI)

ChatGPT follows a very predictable pattern. Being the first widely available model, detection tools were extensively trained with it.

- Structure: Extremely systematic. Always introduces the topic, develops 3-5 numbered points, and concludes.

- Vocabulary: Tends toward “safe” words like “crucial”, “essential”, “worth mentioning”

- Tone: Polite but impersonal. Never uses contractions or colloquialisms.

- Detection difficulty: Low to moderate. Detection tools have success rates of 75-90%.

Claude (Anthropic)

Claude was designed with an emphasis on safety and naturalness. Its writing is noticeably more human, making it harder to detect.

- Structure: Flexible. Claude doesn’t follow a rigid pattern.

- Vocabulary: More varied. Uses natural conversational fillers such as “well” or “good”.

- Tone: Conversational. Claude really tries to sound like a person writing.

- Detection difficulty: High. Conventional tools detect only 50-70% of Claude texts.

Gemini (Google)

Gemini is the youngest of the three dominant models (relaunched as an improved version in 2024). It was trained with more diverse data and is extremely adaptive.

- Structure: Highly variable, completely adapts to context.

- Vocabulary: Mixes professional with casual unpredictably.

- Tone: Highly variable depending on the prompt.

- Detection difficulty: Very high. General tools detect only 40-55%.

For more detailed comparison on how AI affects the information you consume, see our guide on how AI manipulates your digital memory.

Specific Writing Patterns That Reveal AI

Beyond automated tools, manual analysis remains valuable, especially when you need to understand why writing patterns reveal AI was used.

Pattern 1: Excessive “Inverted Pyramid Structure”

AI texts tend to present the most important information first, then develop it. That is the opposite of how humans naturally write: they frequently build ideas progressively.

Pattern 2: Unnatural Keyword Repetition

If you see “artificial intelligence” used exactly 7 times in 1500 words, it was probably SEO-optimized by AI. Human writers vary more: “AI”, “machine learning”, “automated systems”, etc.
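The arithmetic behind this pattern is simple to reproduce. The sketch below counts a keyword and expresses it per 1,000 words; the function name `keyword_stats` is an assumption, and the sample text is synthetic filler built only to match the 7-in-1,500 example.

```python
import re

def keyword_stats(text: str, keyword: str) -> tuple[int, int, float]:
    """Return (keyword hits, total words, hits per 1,000 words)."""
    normalized = text.lower()
    total_words = len(re.findall(r"[\w']+", normalized))
    pattern = r"\b" + re.escape(keyword.lower()) + r"\b"
    hits = len(re.findall(pattern, normalized))
    per_1000 = 1000 * hits / total_words if total_words else 0.0
    return hits, total_words, per_1000

# Synthetic text: the keyword 7 times (14 words) plus 1,486 filler words = 1,500 words.
text = ("artificial intelligence " * 7 + "filler " * 1486).strip()
hits, total, density = keyword_stats(text, "artificial intelligence")
print(hits, total, round(density, 2))
```

Comparing this density with the counts for synonyms such as “AI” shows whether the writer varies their wording or repeats one search term.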

Pattern 3: Transitions That Are Too Perfect

“Having discussed X, we now move to Y.” This is too formal and perfectly structured. Humans write: “OK, let’s look at Y” or even skip without transition.

Pattern 4: Generalized-Sounding Examples

AI example: “Imagine John, a 35-year-old entrepreneur who needed to improve his productivity.”

Human example: “I know Carlos, and this guy is so disorganized he loses his notes on screen.”

The AI example is generic and perfect. The human one is specific, with unnecessary details.

Pattern 5: Complete Absence of Errors or Doubts

Real human writers doubt. They use “probably”, “I think”, “maybe”. AI texts are confident even when discussing complex concepts.

Why Is Gemini Harder to Detect Than ChatGPT?

This is a question that comes up constantly. Why is Gemini harder to detect than ChatGPT? There are specific technical reasons.

Reason 1: Multimodal Training

Gemini was trained with text, images, and other formats. This diversity makes it linguistically less predictable.

Reason 2: Adaptive Architecture

Gemini dynamically adjusts its output based on context. The same prompt on different days can produce texts significantly different in structure and tone.

Reason 3: More Recent Training Data

ChatGPT has a data cutoff in April 2023 (in many versions). Gemini was updated in 2024-2025, with more diverse and recent data. This makes it less “consistent” and more variable.

Reason 4: Lower Presence in Detector Training Datasets

ChatGPT is the oldest and most popular model. Detection tools were extensively trained with ChatGPT texts. There’s less training data on Gemini, so detectors are less accurate.

Reason 5: Gemini Was Designed Considering Detectors

Google knew there would be AI detectors. Gemini was explicitly trained to avoid obvious AI patterns. ChatGPT, being earlier, didn’t have this consideration initially.

💡 TIP: If an article evades detection even with multiple tools (GPTZero, Originality, Content at Scale), there’s a high probability it’s Gemini or Claude. ChatGPT will almost always be detected by at least one tool.

AI Detection Tools 2026: Current Landscape

The detector ecosystem has evolved dramatically since 2024. Today in 2026, there are specialized options for almost every scenario.

For journalists and fact-checkers:

- NewsGuard + integrated AI detector

- Manual analysis with Turnitin (if you have access)

For academics:

- Turnitin (institutional standard)

- GPTZero (second opinion)

For SEO professionals:

- Originality.AI (batch auditing)

- Content at Scale (detailed analysis)

For general consumers:

- GPTZero (free, intuitive)

- Content at Scale (best price-accuracy ratio)

For writers who want to verify their own content:

- Grammarly + manual analysis

- GPTZero for quick verification

For deeper exploration on how to verify content in specific contexts, read our guide on detecting AI content on Wikipedia.

Can I Use Free Tools to Detect AI in 2026?

Yes, but with limitations. Free tools in 2026 have solid basic functionality but lack advanced precision.

GPTZero (Free):

- Accuracy with ChatGPT: 75-85%

- Accuracy with Claude: 45-55%

- Accuracy with Gemini: 35-45%

- Limit: 20,000 characters per analysis

- Availability: 5 analyses daily in free version

Content at Scale (Freemium):

- Accuracy with ChatGPT: 80-88%

- Accuracy with Claude: 60-70%

- Accuracy with Gemini: 65-75%

- Limit: 1,500 words in free version

- Availability: 1 free analysis per day
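Character limits like the 20,000 listed for GPTZero mean that long articles have to be checked in pieces. A minimal sketch of one way to split a text, assuming paragraphs are separated by blank lines; the function name `chunk_by_chars` is illustrative and the default limit is simply the figure quoted above.

```python
def chunk_by_chars(text: str, limit: int = 20_000) -> list[str]:
    """Split text into chunks of at most `limit` characters, preferring paragraph breaks."""
    chunks: list[str] = []
    current = ""
    for paragraph in text.split("\n\n"):
        # A single oversized paragraph is hard-split at the limit.
        while len(paragraph) > limit:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(paragraph[:limit])
            paragraph = paragraph[limit:]
        candidate = paragraph if not current else current + "\n\n" + paragraph
        if len(candidate) <= limit:
            current = candidate
        else:
            chunks.append(current)
            current = paragraph
    if current:
        chunks.append(current)
    return chunks

article = "\n\n".join(["a" * 8000, "b" * 8000, "c" * 8000])
pieces = chunk_by_chars(article)
print([len(piece) for piece in pieces])
```

Each chunk uses up one of the daily free analyses, and scores for separate chunks of the same article can differ.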

My recommendation: Start with free tools (GPTZero). If you need consistent accuracy, invest in Originality.AI ($20/month) which offers the best price-performance ratio.

Troubleshooting: When Detectors Give Contradictory Results

It’s common for two tools to give different results. Here’s what to do.

Scenario 1: GPTZero says 70% AI, but Originality.AI says 25% AI

This generally means mixed content: the text was likely generated by AI and then significantly edited, or “humanized”, by a person.

Recommended action: Consider the content as “questionable origin”. If it’s for academic research, question the authorship with the author.

Scenario 2: Both tools say >60% AI, but the content seems very human

It’s possible the author used prompt manipulation: an AI model was given specific instructions to imitate a human style and evade detectors.

Recommended action: Trust the tools. The current sophistication of models like Claude and Gemini makes them look human even when they’re pure AI.

Scenario 3: All detectors say 100% AI, but you know an expert wrote it

Possible false positive. This frequently happens with:

- Highly technical or academic content

- Very structured texts (like reports)

- Content that repeats specialized vocabulary

Recommended action: Perform exhaustive manual analysis. Look for evidence of original research, specific citations, or unique details only an expert could know.

Best Practices for Detecting AI in 2026

Summary of the most effective strategy we’ve found:

For maximum accuracy (15 minutes):

- Paste text in GPTZero (initial result)

- If probability is >40%, copy into Originality.AI (confirmation)

- If both agree, use a third tool specific to the suspected model (Winston AI for Claude, Content at Scale for Gemini)

- Perform manual analysis looking for patterns

- Draw an informed conclusion based on multiple signals
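The 15-minute workflow is essentially a small decision tree, which can be sketched as follows. The function name `next_step` is hypothetical, and treating “both agree” as “both scores are above the 40% threshold” is an assumption, since the guide does not define agreement precisely.

```python
from typing import Optional

def next_step(gptzero: float, originality: Optional[float] = None,
              threshold: float = 40.0) -> str:
    """Suggest the next action in the maximum-accuracy workflow."""
    if gptzero <= threshold:
        return "manual analysis"
    if originality is None:
        return "confirm with Originality.AI"
    if originality > threshold:
        return "run a model-specific tool, then manual analysis"
    return "manual analysis (tools disagree)"

print(next_step(20))
print(next_step(55))
print(next_step(55, 70))
print(next_step(55, 10))
```

Every branch ends in manual analysis, which reflects the point of the workflow: tool scores narrow things down, but the conclusion rests on several signals together.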

For speed (3 minutes):

- Paste into GPTZero

- If >60%, it’s probably AI.

- The gray zone (30-60%) requires further investigation.

For professional contexts (complete investigation):

- Use Originality.AI as base tool

- Complement with detailed manual analysis

- Contact the author if significant doubts remain

- Document your process for future audit

✅ EXPECTED RESULT: In 2026, with these techniques and tools, you should be able to determine with 75-85% confidence whether an article was written by AI and, in many cases, which model generated it.

Do Detection Tools Work with Content in Spanish?

This is a critical question for Spanish-speaking readers. The answer is partially yes, but with important limitations.

Tool Support by Language:

| Tool | Spanish | Accuracy in Spanish |
|---|---|---|
| GPTZero | ✓ Supported | 70-80% |
| Originality.AI | ✓ Supported | 80-85% |
| Content at Scale | Partial (improving) | 65-75% |
| Winston AI | ✓ Supported | 75-82% |
| Turnitin | ✓ Supported | 85-90% |

Important limitation: Detectors are less accurate in Spanish because most were trained predominantly with English data. This means both the AI models and the detectors have less “experience” with Spanish patterns.

Practical consequence: The same Claude-generated text might get 45% in GPTZero in Spanish but 25% in English, so scores are not directly comparable across languages. Compensate by treating borderline scores for Spanish content with extra caution.

Recommendation for Spanish readers: Use Originality.AI or Turnitin for maximum reliability. These were specifically optimized for Spanish detection in 2024-2025.

Use Cases: When You Need to Detect AI

To give real context to this guide, here are practical scenarios where you need to detect if an article was written by AI:

1. You’re a University Professor

A student submitted an essay about the history of AI. The content is too perfect, the structure is flawless. You suspect Claude.

Action: Run it through Turnitin, which automatically flags likely AI-generated passages. If the result is positive, document it and apply sanctions per institutional policies.

2. You’re a Blogger and Want to Audit Your Content

You hired a freelance writer. You want to verify they actually wrote the content and didn’t just generate it with ChatGPT + minimal editing.

Action: Use Originality.AI. If it detects >40% AI, you have evidence for a claim or non-payment.

3. You’re a Journalist Verifying a Competitor’s Article

A rival site published a viral article about your industry. It seems well-written but you suspect it might be Gemini-generated.

Action: Combine Content at Scale (good for Gemini) with manual analysis looking for verifiable data. If the information can’t be confirmed, it’s potentially misinformation.

4. You’re an Academic Researcher

You found a scientific article you want to cite. You need to be sure it’s legitimate and wasn’t generated by AI (which would invalidate your entire analysis).

Action: Use Turnitin. If it passes clean, check the article’s original references. If those don’t exist or are fabricated, it’s pure AI.

5. You’re a Consumer Evaluating Content on Social Media

You see a Twitter thread about startups that seems too well-edited. You want to know if it’s an expert human or just an AI bot posting.

Action: Copy text into GPTZero. If >60% AI, consider the information automated propaganda. If <30%, probably a real person.

Conclusion and Final Recommendations

In 2026, detecting if an article was written by AI is a completely necessary skill. Models like Claude, Gemini, and ChatGPT are so sophisticated that detection requires a combination of automated tools and manual analysis.

Key points to remember:

- No tool is 100% accurate. Always combine multiple detectors.

- ChatGPT is easy to detect; Claude and Gemini are much more elusive.

- Free tools work, but the improved accuracy of paid tools justifies a small investment ($20/month in Originality.AI).

- Manual analysis remains valuable for high-risk contexts.

- Spanish content has lower detection accuracy; compensate by being more cautious with texts that seem “too perfect”.

My specific recommendation:

If you’re an occasional user, use GPTZero. It’s free, intuitive, and sufficiently accurate for most cases.

If you need regular accuracy, invest in Originality.AI. It offers the best balance between price ($20/month), accuracy (85%+), and features (detects human-AI mix, batch auditing).

If you work in academic contexts, insist your institution uses Turnitin. It’s the standard and combines AI detection with plagiarism checking.

If you want maximum specialization, combine: GPTZero (quick initial check) + Originality.AI (confirmation) + Content at Scale (Gemini specialization).

Final thought: AI detection isn’t about paranoia, it’s about integrity. We need to know what content is authentic, researched, and responsible. As AI becomes more sophisticated, transparency in authorship becomes more valuable, not less.

Use these tools wisely. Stay skeptical but informed.

Frequently Asked Questions (FAQ)

What’s the difference between detecting ChatGPT and detecting Claude?

The main difference lies in linguistic patterns. ChatGPT is highly structured and predictable: it uses numbered lists, formal transitions, and an educated but impersonal tone. Claude is more conversational and natural, making it significantly harder to detect automatically.

ChatGPT has a clear “signature” that tools recognize with 80%+ accuracy. Claude was specifically designed to avoid obvious AI patterns, resulting in detection rates of only 50-70%. Practically speaking, if an automatic detector marks something as AI with 90%+ confidence, it’s very likely ChatGPT. If confidence is 50-70%, it could be Claude.

Can I use free tools to detect AI in 2026?

Yes, though with limitations. GPTZero is the most popular free tool and works reasonably well for ChatGPT (75-85% accuracy) but is less effective with Claude and Gemini (35-55%). Content at Scale also offers a free daily analysis with better Gemini accuracy (65-75%).

The reality is that free tools work, but if you need consistent professional-grade accuracy, a subscription to Originality.AI ($20/month) gives you superior accuracy and unlimited analyses. It’s a small investment that quickly pays for itself if you verify content regularly.

Why is Gemini harder to detect than ChatGPT?

Several technical reasons. First, Gemini was trained with more diverse and recent data (through 2024-2025 vs. April 2023 for many ChatGPT versions). Second, Gemini is adaptively designed, adjusting tone and structure based on prompt context. Third, Google knew detectors would exist, so Gemini was explicitly trained to avoid obvious AI patterns.

The result is Gemini produces highly variable text, which confuses detectors trained on ChatGPT’s more consistent patterns. Detection tools achieve only 40-55% success with Gemini vs. 75-90% with ChatGPT.

What writing patterns reveal AI was used?

Key patterns include: excessively perfect structure (identical paragraph lengths), unnatural keyword repetition (specific words appearing every two paragraphs), overly smooth transitions, generic examples lacking personal details, complete absence of errors or expressed doubts, and perfectly consistent tone throughout.

Also look for excessive numbered lists, words like “explore”, “delve”, and “highlight”, and conclusions that always start with “In conclusion”. Humans write in a much less organized way, with abrupt jumps, expressed uncertainties, and occasional errors that break the perfect flow typical of AI.

Do detection tools work with Spanish content?

They work, but with lower accuracy than English. GPTZero achieves 70-80% in Spanish vs. 80-85% in English. Originality.AI is more reliable in Spanish (80-85%) because it was specifically optimized for it. Content at Scale is improving but still at 65-75%.

The reason for lower accuracy is that most detectors were trained predominantly with English datasets. This also affects AI models, which have less “experience” with Spanish patterns. As compensation, be more cautious with Spanish content that seems too perfect: increase your suspicion threshold by 10-15% compared to English.

The AI Guide — Our content is developed from official sources, documentation, and verified user opinions. We may receive commissions through affiliate links.